vRealize Automation (vRA) 8 can manage many things, one of which is Kubernetes clusters. Out of the box, vRA can create Tanzu Kubernetes Grid clusters, but you can also use it to manage external K8s clusters. This is a quick blog on how to add a non-managed cluster to vRA and deploy to it.

Today I am going to use vRA to deploy a new namespace into an Azure Kubernetes Service (AKS) cluster using a Cloud Assembly blueprint.

To add a cluster as a consumable resource we require three things:

- K8s API URL

- CA Cert for the K8s Cluster

- Either a bearer token, or a client certificate and client key, for authentication

Fortunately, all of these can be found in our kubeconfig file. To get the correct data into the kubeconfig, we first need to pull down the cluster credentials so that kubectl can authenticate. For an AKS cluster we use the Azure CLI:

```shell
$ az login
$ az aks get-credentials -n montytest-publicaks -g test-aks-cluster --admin
```

NOTE: I am using the `--admin` option because my cluster has AAD authentication enabled; without it, kubectl would require an interactive login, which will not work with vRA.

Once authenticated, we can pull our auth data from the kubeconfig file at `~/.kube/config`.

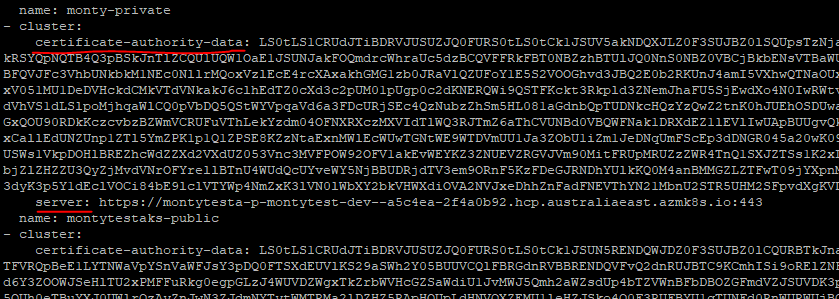
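Inside the kubeconfig, the pieces are linked through a context: `current-context` names a context, and that context points at a cluster entry (server address and CA data) and a user entry (credentials). A minimal Python sketch of that lookup, assuming the file has already been parsed into a dict (for example from the JSON printed by `kubectl config view --raw -o json`); the helper names `_find` and `resolve_current_context` are hypothetical:

```python
from typing import Any


def _find(entries: list[dict[str, Any]], name: str, kind: str) -> dict[str, Any]:
    """Return the inner object (e.g. entry["cluster"]) of the list entry called `name`."""
    for entry in entries:
        if entry["name"] == name:
            return entry[kind]
    raise KeyError(f"no {kind} named {name!r} in kubeconfig")


def resolve_current_context(kubeconfig: dict[str, Any]) -> tuple[dict[str, Any], dict[str, Any]]:
    """Follow current-context to its cluster and user entries (hypothetical helper)."""
    # contexts: [{"name": ..., "context": {"cluster": ..., "user": ...}}]
    context = _find(kubeconfig["contexts"], kubeconfig["current-context"], "context")
    # clusters: [{"name": ..., "cluster": {"server": ..., "certificate-authority-data": ...}}]
    cluster = _find(kubeconfig["clusters"], context["cluster"], "cluster")
    # users: [{"name": ..., "user": {"token": ... or client cert/key data}}]
    user = _find(kubeconfig["users"], context["user"], "user")
    return cluster, user
```

The `--raw` flag matters in that example command: without it, kubectl redacts the certificate and key data in its output.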

The CA certificate (`certificate-authority-data`) and the API server address (`server`) are at the beginning of the config file, under the cluster details:

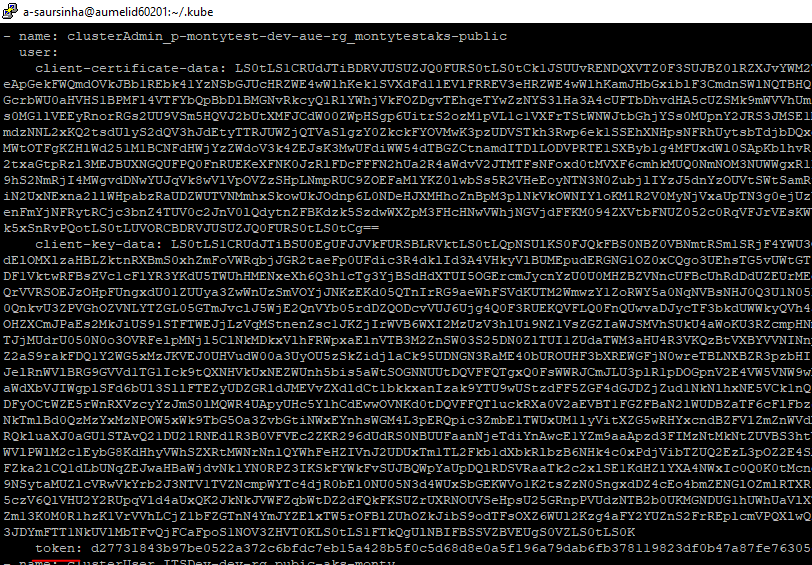
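The kubeconfig stores `certificate-authority-data` as the PEM certificate encoded once more in base64. Assuming the vRA form wants the CA certificate as PEM text (the usual `-----BEGIN CERTIFICATE-----` block), a small sketch of decoding it; the function name is hypothetical:

```python
import base64


def cluster_server_and_ca(cluster: dict) -> tuple[str, str]:
    """Return (API server URL, CA certificate as PEM text) from a kubeconfig cluster entry."""
    server = cluster["server"]
    # certificate-authority-data is the PEM file, base64-encoded again
    ca_pem = base64.b64decode(cluster["certificate-authority-data"]).decode("ascii")
    return server, ca_pem
```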

And the user token, under the user details:

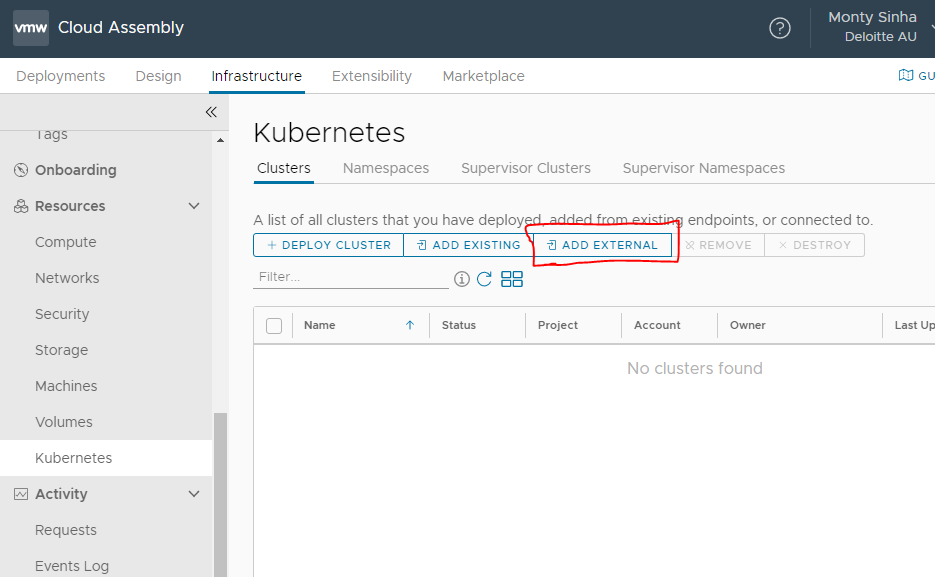

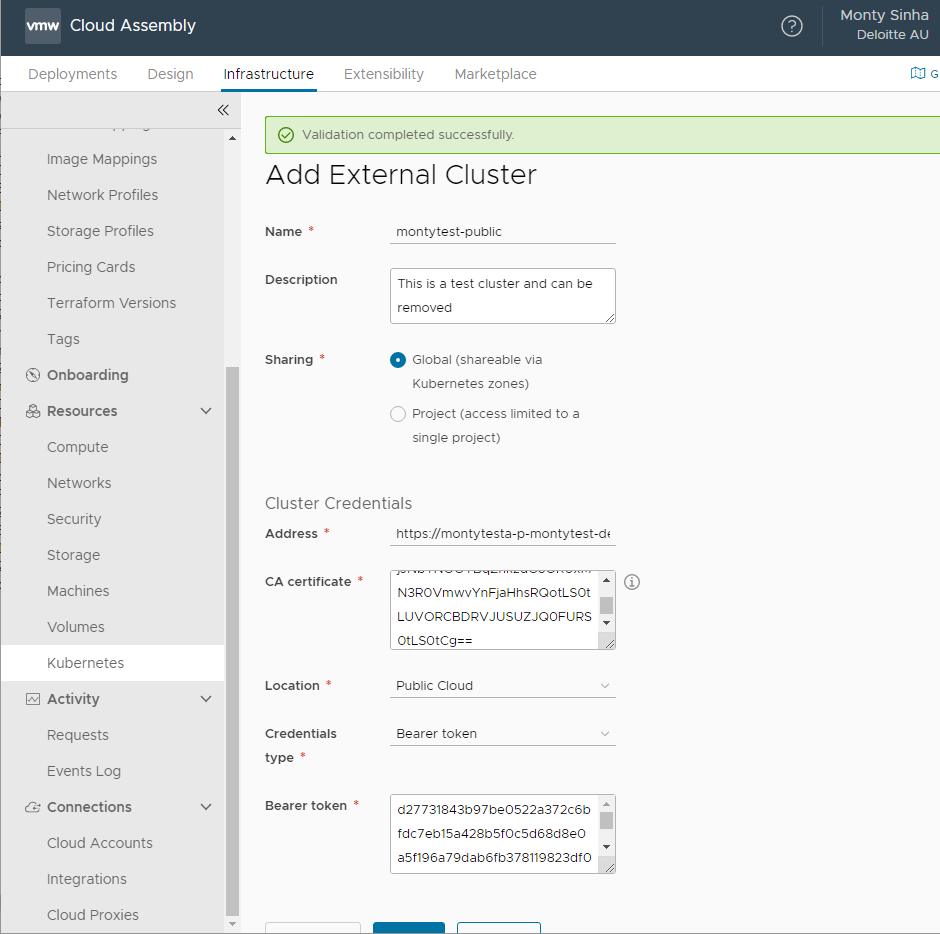
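A user entry can carry a bearer token, embedded client certificate and key data, or an `exec`/`auth-provider` plugin (the interactive AAD case the `--admin` option avoids). A sketch of picking out what vRA can accept; preferring the token over the certificate pair is my own assumption, and the function name is hypothetical:

```python
import base64


def cluster_credentials(user: dict) -> dict[str, str]:
    """Return a bearer token, or decoded client cert/key PEM, from a kubeconfig user entry."""
    # Assumption: prefer the bearer token when both forms are present
    if "token" in user:
        return {"token": user["token"]}
    if "client-certificate-data" in user and "client-key-data" in user:
        return {
            "client_cert": base64.b64decode(user["client-certificate-data"]).decode("ascii"),
            "client_key": base64.b64decode(user["client-key-data"]).decode("ascii"),
        }
    # exec/auth-provider users (e.g. an AAD login) have nothing static to paste into vRA
    raise ValueError("user entry has no token or embedded client cert/key")
```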

We enter this data in vRA under Infrastructure > Resources > Kubernetes, using “Add external”.

Here we input the server address, CA certificate and bearer token.

Hit Validate to confirm that vRA can authenticate to the cluster, then hit OK.

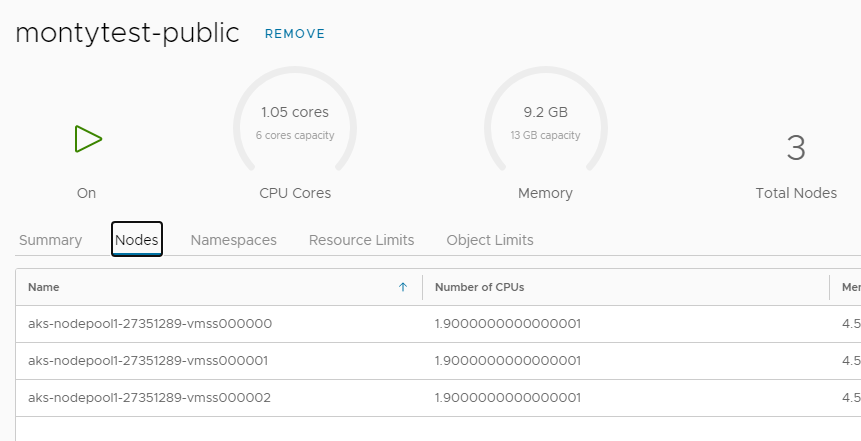
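I don't know exactly which request vRA's Validate button makes, but you can run a comparable check yourself before pasting the values in. This standard-library sketch (function names are hypothetical) lists namespaces over HTTPS with the bearer token while trusting only the cluster CA; listing namespaces requires an authenticated caller, so a bad token fails with a 401 or 403:

```python
import json
import ssl
import urllib.request


def build_namespaces_request(server: str, token: str) -> urllib.request.Request:
    """Build a GET for /api/v1/namespaces carrying the bearer token."""
    url = server.rstrip("/") + "/api/v1/namespaces"
    return urllib.request.Request(url, headers={"Authorization": f"Bearer {token}"})


def list_namespaces(server: str, token: str, ca_pem: str, timeout: float = 10.0) -> list[str]:
    """Return namespace names; raises on TLS failure or an HTTP error such as 401/403."""
    # Trust only the cluster's own CA (the same PEM you give vRA)
    context = ssl.create_default_context(cadata=ca_pem)
    request = build_namespaces_request(server, token)
    with urllib.request.urlopen(request, context=context, timeout=timeout) as response:
        body = json.load(response)
    return [item["metadata"]["name"] for item in body["items"]]
```

Call `list_namespaces` with the same server URL, token and PEM CA certificate you enter in vRA; if it returns names, those values should authenticate.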

After a few minutes, vRA should start populating existing data about the cluster:

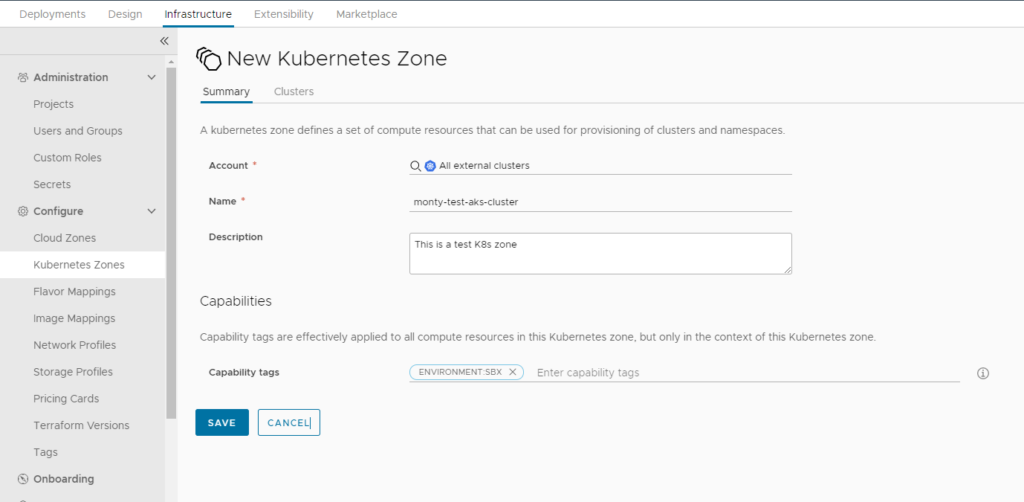

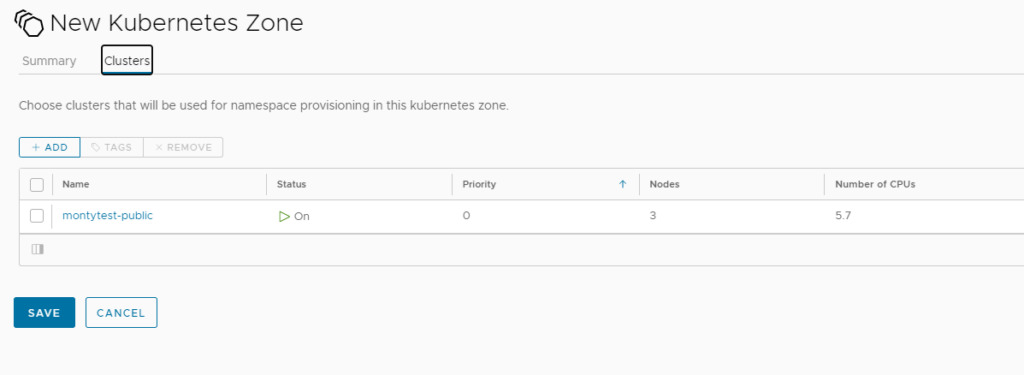

Now that the resource is defined, we need to associate it with a Kubernetes Zone so it can be consumed by the project:

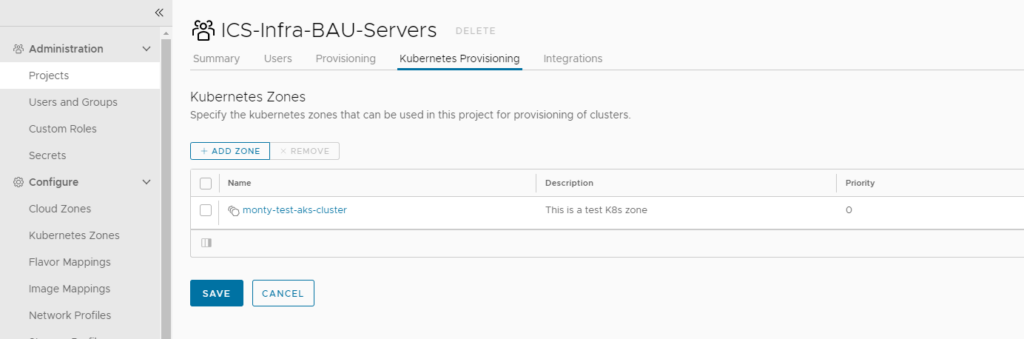

We will also add the cluster to the project:

We are now ready to consume this cluster. At this stage we can only deploy new namespaces, so we are going to use the Cloud Assembly blueprint below:

```yaml
formatVersion: 1
inputs:
  ns_name:
    type: string
resources:
  Cloud_K8S_Namespace_1:
    type: Cloud.K8S.Namespace
    properties:
      name: '${input.ns_name}'
```

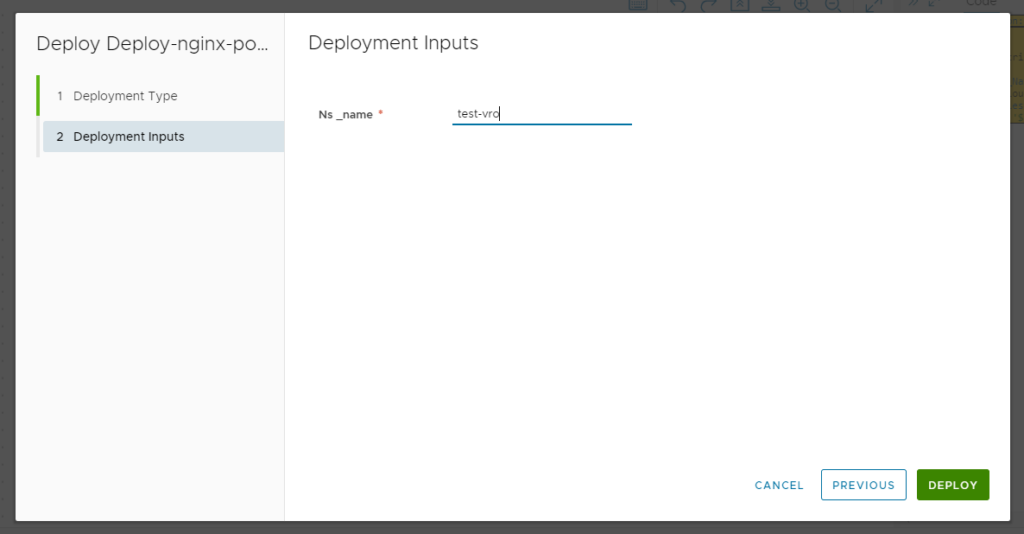
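The `ns_name` input is a free-form string, but Kubernetes only accepts namespace names that are valid DNS-1123 labels: lowercase alphanumerics and `-`, starting and ending with an alphanumeric, at most 63 characters. A small check like this one (hypothetical helper, not part of vRA) shows which values will be accepted before you request a deployment:

```python
import re

# DNS-1123 label pattern that Kubernetes applies to namespace names
_DNS1123_LABEL = re.compile(r"[a-z0-9]([-a-z0-9]*[a-z0-9])?")


def is_valid_namespace_name(name: str) -> bool:
    """True if `name` would be accepted as a Kubernetes namespace name."""
    return len(name) <= 63 and _DNS1123_LABEL.fullmatch(name) is not None
```

For example, `dev-team1` passes, while `Dev`, `-dev` and `dev_team` are rejected.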

Deploying this blueprint:

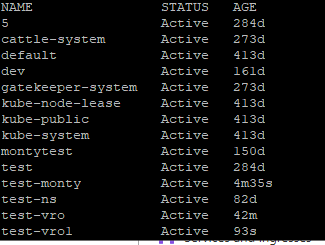

Once the deployment completes, the namespace should be provisioned and ready to use.

This is how you can use vRA to provision namespaces on an external cluster and allocate them to projects.